What AI Can and Cannot Do: A Conversation on Capability and Limits

A grounded discussion on the limits of AI beyond the hype, from hallucination and bias to decision-making, accountability, and the growing challenge of maintaining human judgment in an increasingly automated world.

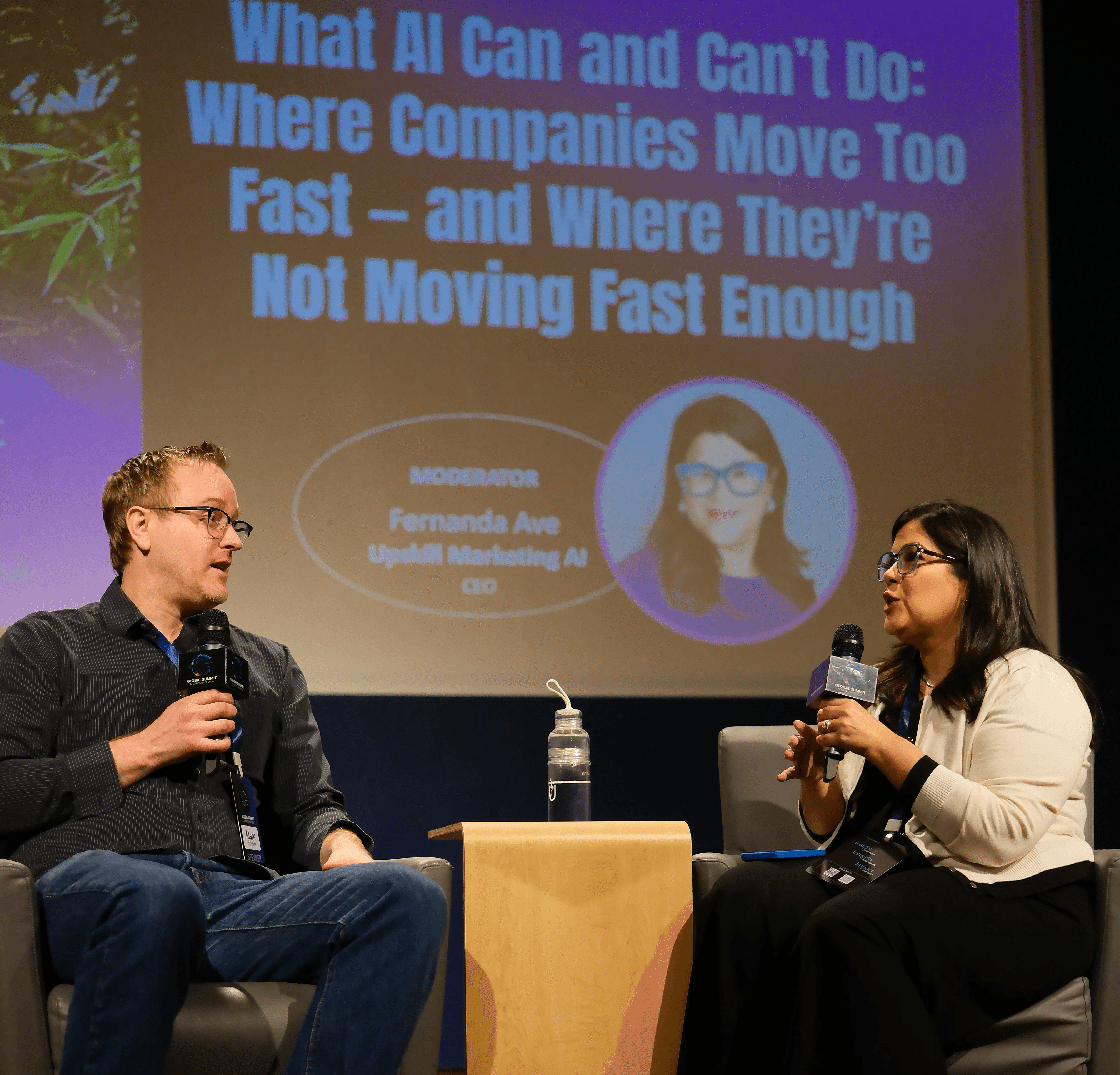

At Global Summit AI Vancouver 2026, a fireside conversation between Mark Schmidt and Fernanda Ave began with a deceptively simple question:

What can AI actually do in the real world, and where does it fall short?

Very quickly, the conversation moved beyond capability.

It became a discussion about limits, trade-offs, and responsibility.

The gap is not technical. It is one of expectation.

One of the clearest insights from the conversation is that many organizations are not struggling because AI is weak.

They are struggling because their expectations are misaligned with how these systems actually work.

AI systems are built on training data. That sounds obvious, but the implications are often overlooked:

They inherit bias

They perform unevenly outside familiar domains

They rarely say “I don’t know”

Instead, they produce answers that are coherent, confident, and sometimes completely wrong.

This is not a temporary bug.

Hallucination and bias are likely structural properties of the current paradigm, not problems that will simply disappear with the next model release.

If a product or business model assumes otherwise, the risk is already built in.
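One way to design for this, rather than assume it away, is to make "I don't know" an explicit outcome of the system. The sketch below is only an illustration, assuming a hypothetical upstream step that attaches a confidence score between 0 and 1 to each candidate answer. The names `Answer` and `answer_or_abstain` and the 0.8 threshold are assumptions made for this example, not something described in the conversation. Confidence scores from real models are often poorly calibrated, so a gate like this reduces the risk without removing it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Answer:
    text: str
    confidence: float  # hypothetical score in [0, 1] from an assumed upstream model


def answer_or_abstain(candidate: Optional[Answer], threshold: float = 0.8) -> str:
    """Return the candidate text only when its confidence clears the threshold."""
    if candidate is None or candidate.confidence < threshold:
        # Abstain explicitly instead of returning a fluent but shaky answer.
        return "I don't know"
    return candidate.text


print(answer_or_abstain(Answer("Paris", 0.97)))
print(answer_or_abstain(Answer("Lyon", 0.41)))
```

The threshold is a product decision as much as a technical one: set it too low and confident errors slip through, set it too high and the system abstains on questions it could have answered.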

The most reliable use case today is not replacement

There is a persistent narrative that AI replaces jobs.

In practice, the more durable pattern looks different.

In a radiology example shared during the conversation, AI did not replace the specialist. It handled a large portion of report writing, allowing the human expert to move significantly faster while maintaining final control.

The outcome was not elimination. It was amplification.

More cases processed

Less backlog

Better use of scarce expertise

The strongest applications of AI today are not autonomous systems. They are systems that extend human capability.
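The radiology pattern can be sketched as a workflow in which the AI step only ever produces a draft and nothing is released without a named human approver. This is a minimal illustration under stated assumptions: `Report`, `draft_report`, `sign_off`, and `release` are hypothetical names, and the drafting function is a placeholder template rather than a real model call.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Report:
    case_id: str
    draft: str                         # text proposed by the AI step
    final: Optional[str] = None        # set only after human sign-off
    approved_by: Optional[str] = None  # name of the human who approved it


def draft_report(case_id: str, findings: str) -> Report:
    # Placeholder for a model call; here the "draft" is just a template.
    return Report(case_id=case_id, draft=f"Findings: {findings}")


def sign_off(report: Report, reviewer: str, edited_text: Optional[str] = None) -> Report:
    # The reviewer may accept the draft as-is or replace it with an edited version.
    report.final = edited_text if edited_text is not None else report.draft
    report.approved_by = reviewer
    return report


def release(report: Report) -> str:
    # Nothing leaves the system without a named human approver.
    if report.approved_by is None or report.final is None:
        raise PermissionError(f"Report {report.case_id} has not been signed off")
    return report.final


report = draft_report("case-001", "no acute abnormality")
signed = sign_off(report, reviewer="Dr. Example")
print(release(signed))
```

The design choice that matters is in `release`: the speed-up comes from the draft, while final control stays with the person who signs.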

Why so many AI projects never leave the demo stage

The technical demo often works.

The real world is where things break.

Two patterns show up repeatedly:

First, humans behave unpredictably.

Users will ask questions that were never anticipated. Edge cases are not rare. They are constant.

Second, systems can be pushed into failure.

With enough probing, they can be made to produce outputs that companies never intended.

This creates a gap between what is possible and what is deployable.

Technical viability does not equal operational safety.

Automation still needs judgment

There is a strong push to remove humans from the loop.

The conversation pushes back on that instinct.

Fully automated systems can fail in ways that are subtle, embarrassing, or costly. In some cases, they generate outputs that should never have been released.

The idea of using one agent to monitor another is appealing, but it introduces another layer of uncertainty rather than removing it.

In high-stakes contexts, human judgment is not a bottleneck. It is a safeguard.
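A back-of-the-envelope calculation illustrates why a monitoring agent changes the risk without removing it. The sketch below assumes, purely for illustration, that the generating agent and the monitor fail independently; all rates are hypothetical numbers, not figures from the conversation. If the two agents share blind spots (for example, because they were trained on similar data), the monitor's miss rate on exactly those failures will be higher than the independent estimate suggests.

```python
def undetected_failure_rate(p_fail: float, p_monitor_miss: float) -> float:
    """Chance a bad output is produced AND the monitor misses it (independence assumed)."""
    return p_fail * p_monitor_miss


def wrongly_blocked_rate(p_fail: float, p_false_alarm: float) -> float:
    """Chance a good output is produced AND the monitor blocks it anyway."""
    return (1 - p_fail) * p_false_alarm


# Hypothetical rates chosen only to make the arithmetic concrete.
p_fail = 0.05          # generator produces a bad output 5% of the time
p_monitor_miss = 0.20  # monitor misses 20% of bad outputs
p_false_alarm = 0.10   # monitor flags 10% of good outputs

print(f"undetected failures: {undetected_failure_rate(p_fail, p_monitor_miss):.3f}")
print(f"good outputs blocked: {wrongly_blocked_rate(p_fail, p_false_alarm):.3f}")
```

With these assumed numbers, the monitor cuts undetected failures from 5% to about 1%, but it also blocks roughly 9.5% of all outputs that were fine. The second agent has its own error profile, and someone still has to decide what happens to everything it flags.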

The harder question: what should AI be doing at all

At a certain point, the discussion shifts.

From “can it do this” to “should it.”

In areas like predicting recidivism, AI systems may appear useful on the surface. But they carry deep risks:

Reinforcing bias

Encoding past inequities into future decisions

Even if performance improves, the underlying question remains:

Does delegating this decision to a machine make the system better or worse for people?

Capability alone is not a sufficient justification.

When everyone uses the same intelligence

Another phenomenon discussed is what some researchers call “Trend Slop.”

Different models, trained on similar data, often produce similar recommendations.

At scale, this leads to something more concerning:

Organizations making similar decisions

Hiring filters converging

Edge cases disappearing

If you fall slightly outside what the model considers “ideal,” you may not be rejected just once.

You may get rejected everywhere.

Standardized intelligence can quietly remove human variability.
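The "rejected everywhere" effect can be made concrete with a small calculation. The sketch below compares two simplified extremes: employers whose filters reject independently of one another, and employers who all use effectively the same filter. The function names and the numbers are hypothetical, chosen only for illustration; real hiring filters sit somewhere between these two cases.

```python
def rejected_everywhere_independent(p_reject: float, n_employers: int) -> float:
    """Probability of rejection by every employer when filters decide independently."""
    if n_employers < 1:
        raise ValueError("n_employers must be at least 1")
    return p_reject ** n_employers


def rejected_everywhere_identical(p_reject: float, n_employers: int) -> float:
    """Probability of rejection by every employer when all share one filter."""
    if n_employers < 1:
        raise ValueError("n_employers must be at least 1")
    # One shared decision: if the filter rejects once, it rejects at every employer.
    return p_reject


# Hypothetical candidate whom any single filter rejects 70% of the time.
p_reject, n = 0.7, 10
print(f"independent filters: {rejected_everywhere_independent(p_reject, n):.3f}")
print(f"identical filters:   {rejected_everywhere_identical(p_reject, n):.3f}")
```

Under these assumptions, a candidate facing ten independent filters is shut out entirely less than 3% of the time, while the same candidate facing ten copies of one filter is shut out 70% of the time. Variation between decision-makers is what gives people outside the "ideal" a second chance.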

If AI is everywhere, where does advantage come from

AI lowers the barrier to entry.

More people can produce high-quality outputs. More teams can operate at a higher baseline.

That is the upside.

The tension is that it also reduces differentiation.

If everyone uses similar tools, trained on similar data, generating similar outputs, then advantage no longer comes from access.

It comes from something harder to replicate:

Judgment

Context

Taste

Perspective

AI raises the floor. It also forces a rethink of what makes something distinctive.

A simple workflow that gets this right

One of the most practical moments in the conversation came from a writing example.

A non-native English speaker described her process:

She writes everything herself first.

Then she uses AI to refine it.

Then she rewrites it again so that it sounds like herself.

The sequence matters.

AI is used to support expression, not replace it.

If the final output sounds identical to the machine, then the human contribution has already been lost.

The real question is not technical

By the end of the conversation, two tensions remain.

What is technically possible vs what can be responsibly deployed

Moving fast vs building with intention

These are not abstract questions. They show up in product decisions, hiring decisions, and company strategy.

AI changes what we can do.

It does not remove the need to decide what we should do, and who is accountable for it.

Continue the Conversation

If this conversation resonated, we invite you to stay connected with what we are building.

At Global Summit AI Vancouver 2026, we focus on conversations that go beyond surface-level narratives, looking closely at how AI is actually used, where it breaks, and what decisions matter in practice.

We will continue sharing insights like this as we prepare for our next edition.
