Rethinking the AI Trajectory: Institutions, Incentives, and the Possibility of Abundance

As AI continues to advance, the real question may no longer be about capability but about direction. This article explores how institutions, incentives, and system design shape the future of AI, and why the path ahead may depend less on technology than on how we choose to use it.

Across recent discussions on artificial intelligence, a familiar pattern has emerged. Progress is often framed through speed, scale, and competitive advantage. Governments speak in terms of positioning. Companies emphasize capability and infrastructure. Within this narrative, the future appears as a race.

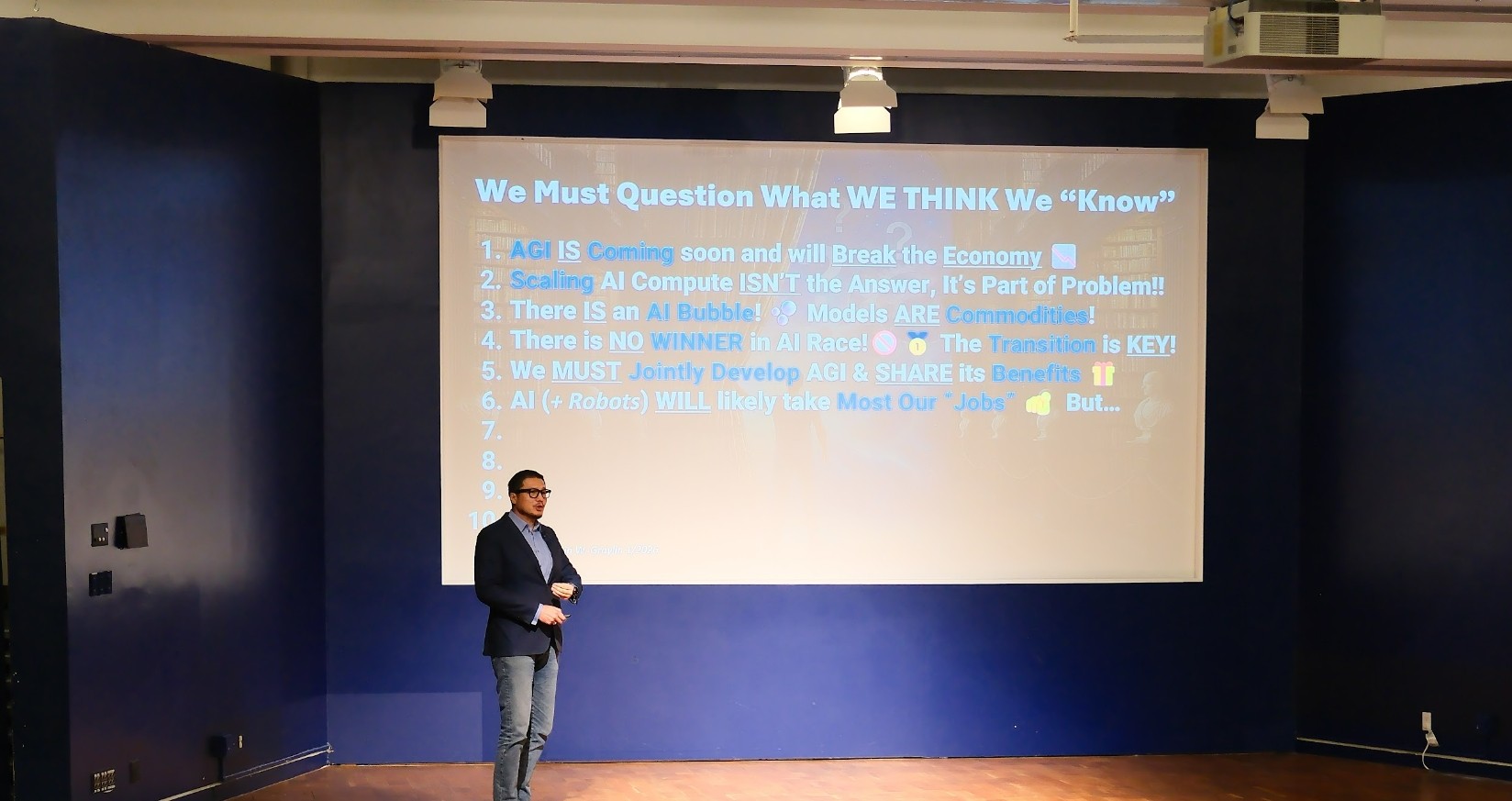

During a recent conversation at Global Summit AI Vancouver 2026, Alvin Wang Graylin offered a different perspective. His keynote moved away from model performance and toward a more structural question: what kinds of systems, incentives, and assumptions are shaping the direction of AI itself?

The Framing Problem: What Game Are We Playing?

Much of today’s thinking implicitly treats AI development as a competitive game. Advantage is assumed to be limited, and progress is measured by relative dominance. This framing carries over into policy, investment, and product strategy.

From a systems perspective, such an approach introduces tension. Artificial general intelligence, if realized, would represent a structural transition rather than a defined endpoint. Economic systems, labor markets, and governance frameworks would all need to adapt. When development is approached primarily through competition, short-term optimization can conflict with long-term stability.

Game theory offers a useful lens. Models centered on secrecy and unilateral gain align with competitive dynamics. Models that allow communication and repeated interaction tend to produce more stable outcomes over time. Current global behavior reflects elements of both, though competitive signaling continues to dominate.
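The contrast between one-off and repeated interaction can be illustrated with a small simulation of the iterated prisoner's dilemma. This is only a sketch: the payoff values are the conventional textbook ones, and the strategy names and the `play` helper are illustrative assumptions rather than anything presented in the keynote.

```python
# Conventional illustrative prisoner's dilemma payoffs (an assumption,
# not taken from the talk). Keys are (my_move, their_move);
# "C" = cooperate, "D" = defect. Values are points for "me".
PAYOFF = {
    ("C", "C"): 3,
    ("C", "D"): 0,
    ("D", "C"): 5,
    ("D", "D"): 1,
}


def always_defect(opponent_history):
    """Unilateral strategy: defect regardless of what the other side did."""
    return "D"


def tit_for_tat(opponent_history):
    """Reciprocal strategy: cooperate first, then mirror the last move seen."""
    return opponent_history[-1] if opponent_history else "C"


def play(strategy_a, strategy_b, rounds):
    """Play a repeated game and return the total scores (score_a, score_b)."""
    seen_by_a, seen_by_b = [], []  # each side's record of the other's moves
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = strategy_a(seen_by_a)
        move_b = strategy_b(seen_by_b)
        score_a += PAYOFF[(move_a, move_b)]
        score_b += PAYOFF[(move_b, move_a)]
        seen_by_a.append(move_b)
        seen_by_b.append(move_a)
    return score_a, score_b


if __name__ == "__main__":
    print(play(always_defect, always_defect, 10))  # mutual defection
    print(play(tit_for_tat, tit_for_tat, 10))      # mutual reciprocity
    print(play(tit_for_tat, always_defect, 10))    # reciprocity vs. defection
```

Under these assumed payoffs, two reciprocating players earn 30 points each over ten rounds, while two defectors earn 10 each. A defector facing a reciprocator gains only in the first round, after which both fall into the low-payoff pattern. The numbers are arbitrary, but the ordering shows why repeated interaction tends to reward cooperation.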

The Limits of Scaling and the Emergence of Commoditization

Another assumption concerns the role of scale. Larger models, more compute, and greater volumes of data are widely viewed as the primary drivers of progress.

Recent developments suggest a more complex trajectory. Advances in efficiency, distillation, and agent-based architectures are reducing dependence on scale alone. Capabilities that once required extensive infrastructure are increasingly accessible through smaller and more specialized systems.

As access broadens, frontier models begin to exhibit characteristics of commoditized technology. In such an environment, differentiation shifts away from raw capability and toward integration, distribution, and application-specific design. The center of value creation moves accordingly.

Productivity, Labor, and the Question of Distribution

The economic impact of AI is frequently described in terms of productivity gains. In many domains, measurable improvements are already evident. However, productivity does not determine how outcomes are distributed.

A central issue concerns the relationship between capital and labor. As automation expands across cognitive tasks, a larger share of output may accrue to capital. Historical transitions of this nature often occur through threshold effects, where gradual improvements eventually enable large-scale substitution.

Early signals are visible. Entry-level roles have shown greater sensitivity to restructuring. Hiring patterns increasingly reflect a focus on efficiency, even in periods of strong corporate performance. These developments suggest that technical capability is beginning to translate into organizational change, although adoption remains uneven.

The question that follows is how gains are allocated. Without intentional mechanisms, increased productivity may coincide with widening disparities.

Diverging Economic Dynamics

Artificial intelligence introduces different price dynamics across sectors. Goods and services that can be digitized or automated tend to move toward lower marginal cost. At the same time, activities that depend on human presence, uniqueness, or constrained supply may experience increasing value.

This divergence creates a dual structure. One part of the economy moves toward abundance, characterized by accessibility and scale. Another retains elements of scarcity. Understanding this structure is essential for anticipating long-term societal impact.

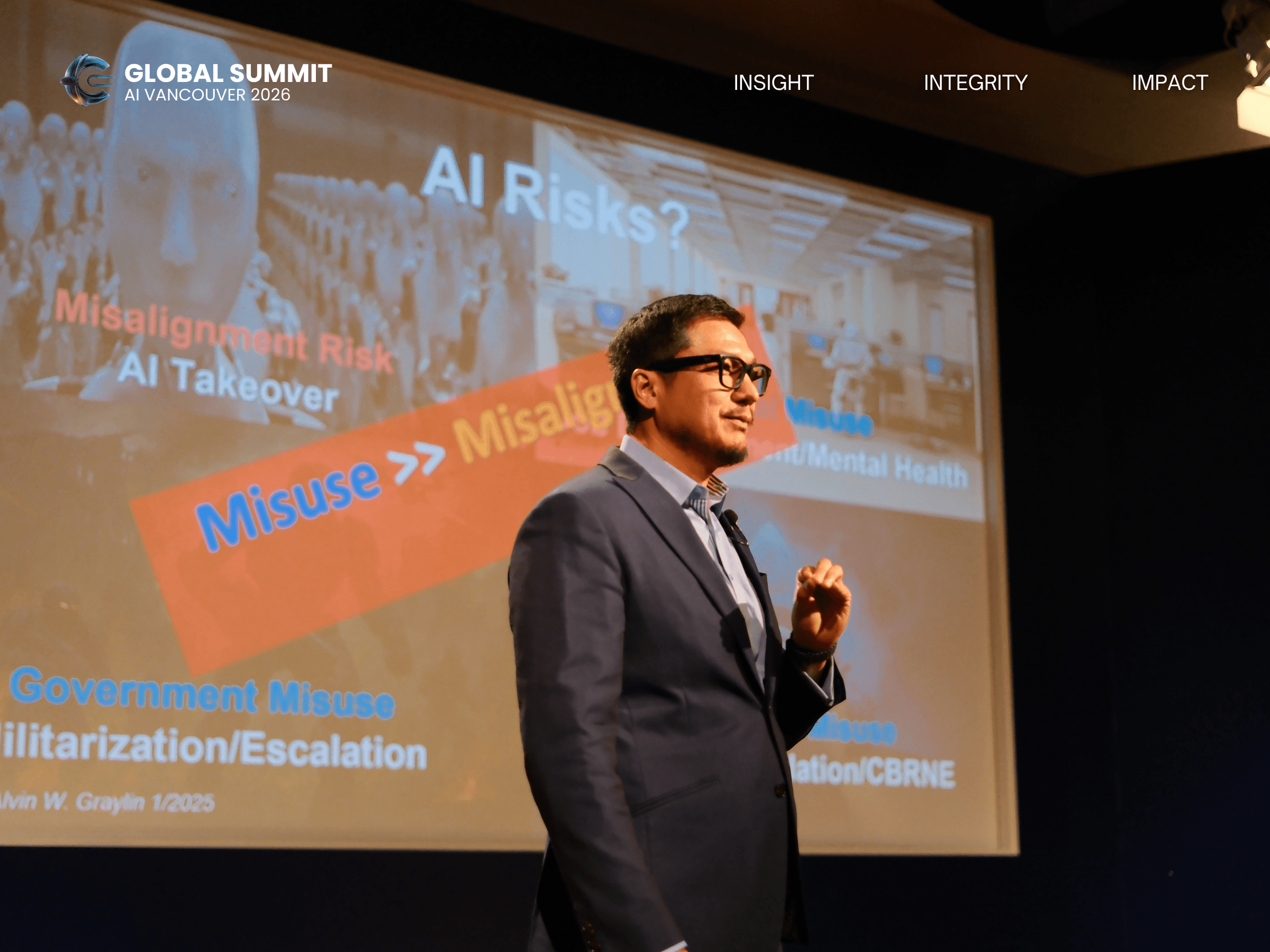

Beyond Technical Risk: Governance and Use

Public discussions of AI risk often center on alignment and hypothetical future scenarios. At the same time, more immediate risks arise from how current systems are deployed.

Misuse at individual, organizational, and governmental levels is already observable. Cybersecurity threats, information manipulation, and automated decision-making in sensitive domains illustrate the impact of existing capabilities. These challenges are shaped by governance, norms, and incentives.

Addressing them requires coordination across institutions and a focus on real-world deployment rather than abstract scenarios.

Rethinking Economic Models

Across history, economic systems have evolved alongside changes in resource constraints and production methods. Artificial intelligence has the potential to reduce constraints on knowledge, coordination, and certain forms of labor.

Graylin’s broader work explores the possibility of a transition toward a system in which scarcity plays a diminished role. Such a shift would challenge existing models built around competition for limited resources, and would require new approaches to value, incentives, and social organization.

This transition remains contingent. Technological capability creates the conditions. Institutional response shapes the outcome.

Implications for Organizations and Individuals

For organizations, barriers to effective AI adoption often arise from culture and structure rather than technology. Incentive alignment, process design, and leadership perspective play a central role.

For individuals, the evolving nature of work raises questions about stability and identity. Career paths defined by long-term progression within large institutions may become less predictable. Other forms of contribution, including service-oriented roles, skilled trades, and community-based work, may gain renewed importance.

These shifts suggest a need for adaptability and a broader understanding of value.

A Question of Direction

The trajectory of AI remains open. Multiple outcomes are possible, ranging from concentrated control to more distributed and cooperative systems. The determining factors lie in how institutions evolve, how incentives are structured, and how collective decisions are made.

Conversations like this one reflect a growing recognition that the future of AI is shaped not only by what we build, but by how we choose to frame and deploy it.

Further Reading

“Beyond Rivalry: A US-China Policy Framework for the Age of Transformative AI”

https://www.digitalistpapers.com/vol2/graylin

“The Enterprise AI Playbook — Lessons from 51 Successful Deployments”

https://digitaleconomy.stanford.edu/app/uploads/2026/03/EnterpriseAIPlaybook_PereiraGraylinBrynjolfsson.pdf

“Abundanism: A New Philosophy for a Post-Scarcity World”

https://abundanist.substack.com/p/abundanism?triedRedirect=true

On a Broader Vision

For those interested in exploring these ideas more deeply, Graylin’s book Our Next Reality offers a broader perspective on how emerging technologies may reshape economic systems and human experience over time.