From research to real-world deployment, how responsibility is shaping the future of AI

As AI capabilities accelerate, responsibility is becoming central to how systems are built and used. This piece reflects on Amii’s approach to responsible AI and its implications for startups, research, and real-world adoption.

At the Global Summit AI Vancouver 2026, one idea surfaced consistently across sessions and conversations. As AI capabilities continue to advance, the central question is shifting. It is no longer only about what AI can do, but also about how it should be used and what responsibility comes with that power.

Amii, the Alberta Machine Intelligence Institute, brought this discussion into focus through both its booth presence and its session on Responsible AI Use. As one of Canada’s three Centers of Excellence under the Pan-Canadian AI Strategy, Amii has built its reputation at the intersection of leading research and practical application. Its work spans reinforcement learning, interdisciplinary collaboration, and startup support, yet what stood out most at the Summit was its clear emphasis on responsibility as a foundation for progress.

Responsible AI begins with everyday use

Responsible AI is often framed as a concern for those building models. In practice, it starts much earlier, at the point where individuals and organizations use AI tools in their daily workflows.

This requires a clear understanding of what a tool is designed for and whether it is appropriate for the task. It also involves evaluating the potential consequences of incorrect outputs, particularly in situations where decisions carry real impact. Equally important is awareness of data exposure, especially when sensitive or proprietary information is involved.

What emerges from this perspective is a shift in mindset. AI is not simply an additional layer of capability that can be applied indiscriminately. It is a system that interacts with real-world outcomes, and each use carries implications beyond the immediate task.

Building AI systems requires deeper accountability

When organizations move from using AI to building AI systems, the level of responsibility expands significantly. Responsible AI principles must be integrated throughout the entire lifecycle of development and deployment.

Privacy and security need to be embedded from the start. Explainability becomes essential, particularly in fields such as healthcare, law, and finance where decisions must be understood and justified. Algorithmic fairness also plays a critical role, ensuring that systems do not reinforce or amplify existing biases.

These considerations extend beyond technical implementation. They sit at the intersection of engineering, policy, and ethics, requiring alignment with regulatory expectations and broader societal values.

Trust is becoming a defining constraint

A deeper tension underlying many Summit discussions is the growing gap between AI capability and public trust. While performance continues to improve, trust does not scale automatically.

As AI-related incidents become more visible, organizations are increasingly evaluated not only on what they build, but on how responsibly they deploy it. Trust is emerging as a defining constraint, shaping adoption, customer relationships, and long-term sustainability.

Amii’s work reflects this shift. Through collaboration, research, and ecosystem support, Amii contributes to an environment where innovation and accountability evolve together.

Supporting startups from research to real-world impact

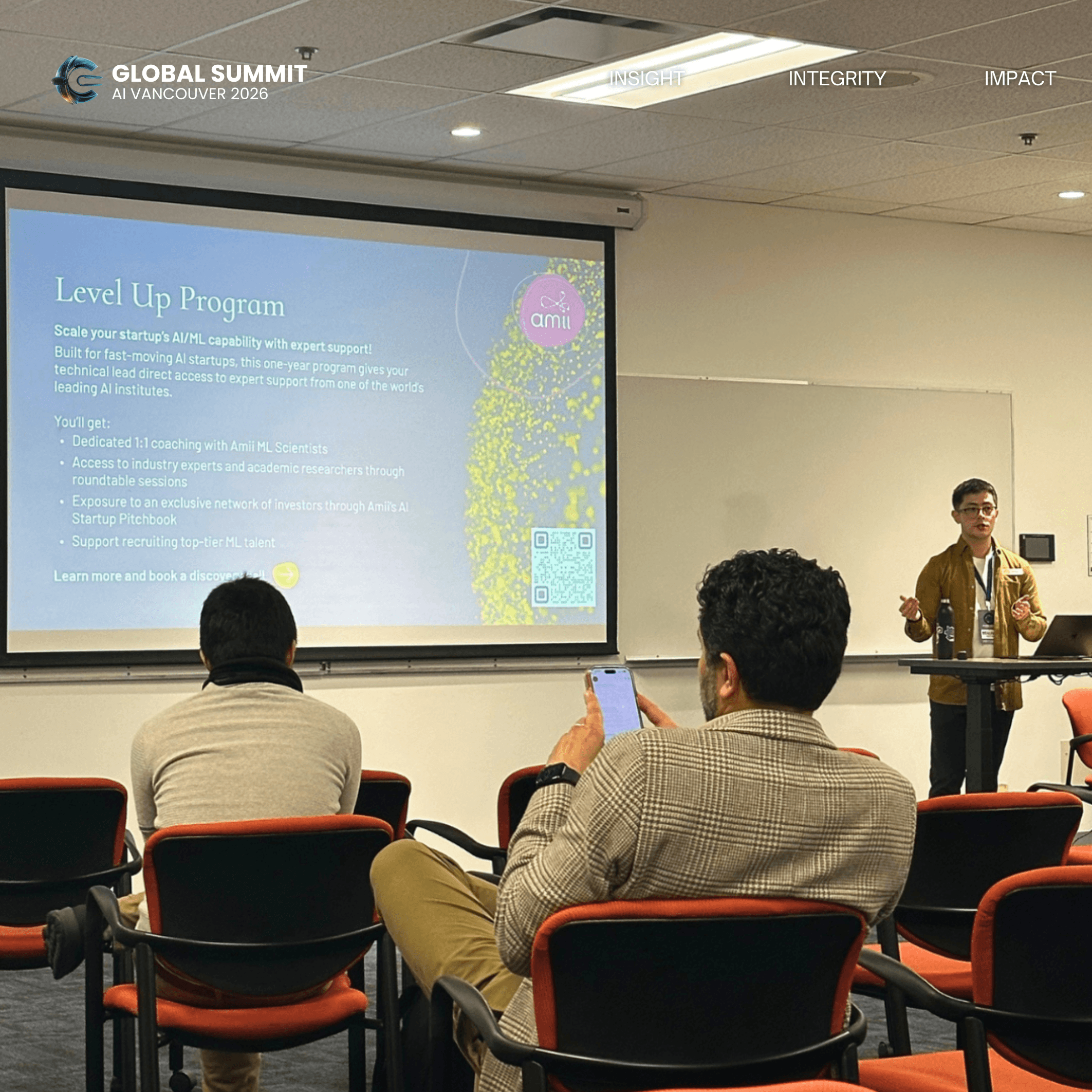

Amii’s commitment to real-world application is also reflected in programs such as the Level Up Program, designed to help startups scale their AI and machine learning capabilities.

Through this one-year program, technical leaders gain direct access to Amii’s ML scientists, along with opportunities to connect with industry experts, academic researchers, and investor networks. The support extends beyond technical guidance. It helps startups navigate hiring challenges, refine their AI strategy, and integrate machine learning into products in a way that is both practical and responsible.

This highlights an important evolution in the AI ecosystem. Success is no longer defined solely by model performance. It increasingly depends on the ability to translate research into systems that can operate reliably, ethically, and at scale.

From capability to responsibility

Amii’s presence at the Summit represents a broader shift in how AI is being developed and adopted. The future of AI will be shaped not by capability alone, but by the decisions made around its use, governance, and integration into society.

Responsible AI, in this context, is not a limitation. It is what enables AI to move from experimentation into infrastructure, and from potential into lasting impact.
