From Models to Systems: What It Takes to Build Reliable AI

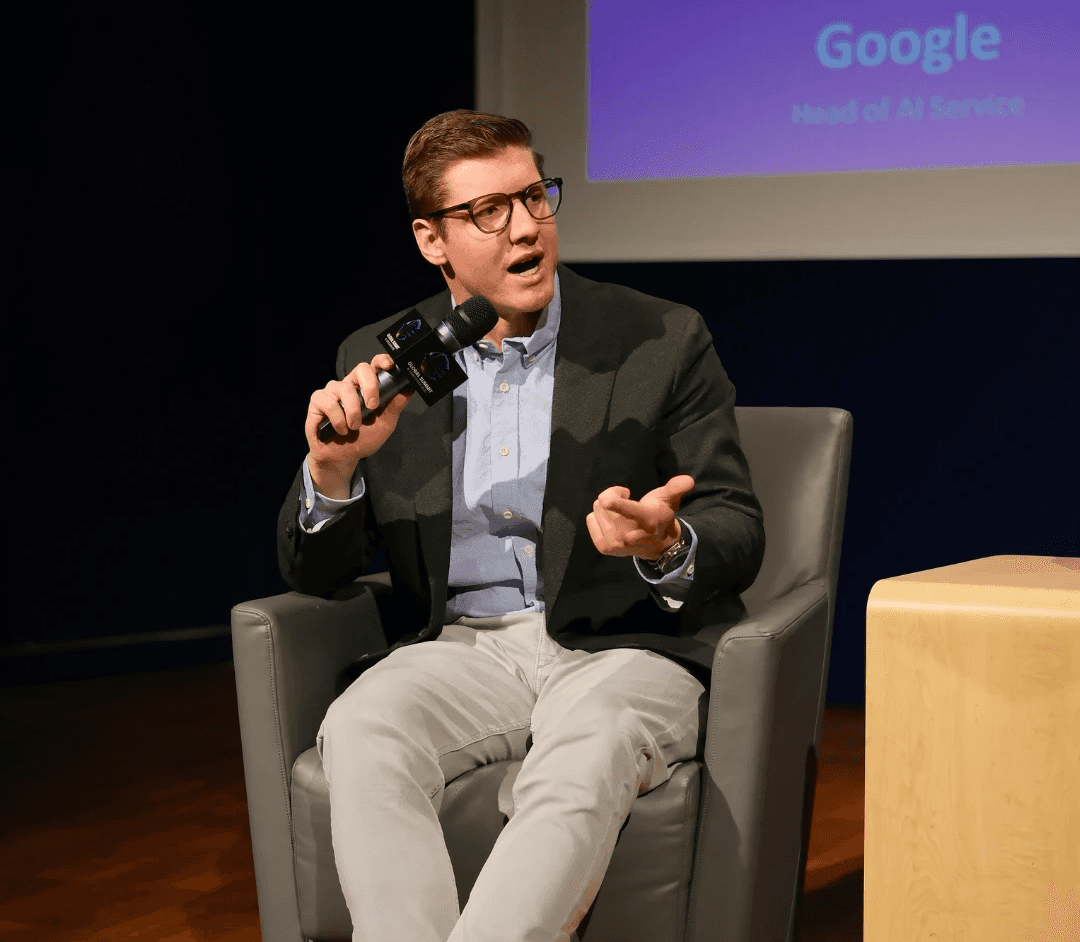

A conversation with Jamie Ulrich (Google) and Fernanda Ave at Global Summit Vancouver 2026 explored a core challenge in enterprise AI today: moving from powerful models to systems that people actually trust and use. The discussion touched on reliability beyond accuracy, why many AI projects stall between demo and production, and how data, evaluation, and process design shape real-world outcomes.

At Global Summit Vancouver 2026, a fireside conversation brought together Jamie Ulrich, Head of AI Services at Google, and Fernanda Ave to explore a question that many enterprises are still working through: how to turn capable models into systems that people actually use.

Models are improving quickly. Capabilities continue to expand. Yet turning those capabilities into systems that people actually use remains a much harder problem.

The discussion kept returning to one idea: progress in AI is increasingly constrained at the system level.

Reliability Extends Beyond Accuracy

Reliability often starts with a technical definition: does the system produce the correct output?

In practice, there is another layer that shapes whether a system is truly usable. Users need to feel confident enough to rely on it.

These two dimensions can move in different directions. A system may perform well in controlled evaluations and still struggle to gain adoption if users do not understand how it works or feel uncertain about its behavior.

Transparency plays a central role here. When users can see how an answer is formed, including reasoning paths, data sources, or confidence levels, their willingness to engage changes. Even when the output remains the same, visibility into the process builds trust.

Tone also becomes part of the system. An assistant that presents answers with absolute certainty can quickly lose credibility when errors appear. A more calibrated response that includes uncertainty and context encourages users to stay involved in decision-making.
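One way to picture transparency and calibrated tone together is a reply format that always carries its sources and adjusts its wording to its confidence. The sketch below is purely illustrative and was not presented in the conversation: the `Answer` class, the `render` function, and the 0.9 and 0.6 thresholds are all assumptions, and it presumes the system can supply a reasonably calibrated confidence score.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    """Hypothetical container for an assistant reply plus its provenance."""
    text: str
    confidence: float  # assumed to be a calibrated probability in [0, 1]
    sources: list[str] = field(default_factory=list)


def render(answer: Answer, high: float = 0.9, low: float = 0.6) -> str:
    """Format a reply so that tone tracks confidence and sources stay visible."""
    # The thresholds are illustrative defaults, not recommended values.
    if answer.confidence >= high:
        prefix = "Based on the sources below:"
    elif answer.confidence >= low:
        prefix = "This is likely correct, but please verify:"
    else:
        prefix = "I am not confident in this answer:"

    lines = [f"{prefix} {answer.text}", f"Confidence: {answer.confidence:.0%}"]
    if answer.sources:
        lines.append("Sources: " + ", ".join(answer.sources))
    else:
        lines.append("Sources: none found")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render(Answer("Q3 churn was 4.1%", 0.72, ["crm_export.csv"])))
```

The design choice worth noting is that the sources line is always printed, even when empty, so the absence of evidence is as visible to the user as its presence.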

Reliability, in this sense, emerges from the interaction between system behavior and human perception.

Strong Models Do Not Guarantee Useful Systems

A recurring theme in the conversation was the gap between model capability and system usability.

In enterprise environments, upgrading models every few months introduces instability. Systems become harder to maintain, more expensive to operate, and more difficult to evaluate consistently.

The nature of the problem also shifts once a system leaves the lab. In research settings, success is often tied to verifiable outcomes.

In real-world scenarios, many outputs are not strictly verifiable. Insight, usefulness, and relevance depend on context. This makes evaluation more complex and pushes teams to think beyond correctness as the primary metric.

Where AI Projects Break: From Demo to Production

A common pattern is the gap between a working prototype and a production system.

Teams often build a proof of concept on fragmented or imperfect data, where the system performs well enough to demonstrate potential. Once the project moves forward, attention shifts heavily toward improving the AI layer.

At scale, this approach starts to break down.

Natural-language-to-SQL generation provides a clear example. A model can generate reasonable SQL queries in isolated tests. When deployed at scale, even a small error rate becomes meaningful: in a system that handles hundreds of thousands of queries, a one percent drop in accuracy translates into thousands of incorrect queries.
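The arithmetic behind that point is simple to sketch. The snippet below is a minimal illustration, not a figure from the conversation: the daily query volumes and the 99 and 98 percent accuracy levels are assumptions chosen for the example, and `expected_incorrect` is a hypothetical helper.

```python
def expected_incorrect(queries_per_day: int, accuracy: float) -> float:
    """Expected number of wrong queries per day at a given accuracy."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    return queries_per_day * (1.0 - accuracy)


if __name__ == "__main__":
    # Assumed volumes; compare 99% accuracy with a one-point drop to 98%.
    for volume in (1_000, 100_000, 1_000_000):
        before = expected_incorrect(volume, 0.99)
        after = expected_incorrect(volume, 0.98)
        print(f"{volume:>9,} queries/day: "
              f"{before:,.0f} wrong at 99%, {after:,.0f} wrong at 98%")
```

At an assumed one million queries per day, each percentage point of accuracy corresponds to roughly 10,000 incorrect queries per day, which is why an error rate that looks negligible in a demo becomes an operational problem in production.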

Instead of pushing the model toward perfect outputs, the more effective move often lies in restructuring the underlying data systems based on expected usage.

This shift reveals a broader pattern. Many issues attributed to models originate in the data layer.

Data Shapes the System’s Upper Bound

As more organizations gain access to similar models and talent, differentiation begins to shift.

Data plays a central role in that shift. Many organizations still operate with data fragmented across CRM platforms, marketing tools, and operational systems, which makes it difficult to build coherent AI systems.

The conversation also pointed to changes in how data is structured and used. Approaches such as knowledge graphs are becoming more common in production environments, helping systems connect information in more flexible ways.

At the same time, proprietary data becomes a key source of advantage. What a company knows about its customers, vendors, and operations directly influences the quality of its AI systems.

AI Creates Space to Rethink the Process

Many organizations approach AI by mapping it directly onto existing workflows.

Each step is assigned to an agent, while the overall structure remains unchanged.

This can improve efficiency, though it often leaves deeper opportunities unexplored.

AI introduces capabilities that were not previously available. In hiring, for example, instead of replicating interview stages, systems can simulate real-world scenarios or evaluate how candidates handle complex interactions.

This changes how the problem itself is defined. The value comes from rethinking the process, rather than only optimizing existing steps.

Building Systems Requires Organizational Alignment

Technology alone does not determine whether AI systems succeed in practice.

Organizations that open access completely often move quickly, though they face challenges around governance and consistency. Others introduce strict processes that slow progress.

The most effective approaches tend to find a balance. People who understand the underlying business processes need to be directly involved in designing AI systems. At the same time, teams require shared standards for evaluation, tooling, and infrastructure.

Much of the capability already exists within organizations. The challenge lies in aligning it.

Evaluation and Risk Define the System in Practice

Evaluation remains one of the most difficult parts of building AI systems.

In production environments, it is not possible to predict every input a user might provide. Systems must be designed to operate under uncertainty.

One risk highlighted in the discussion is the effect of high but imperfect accuracy. A system that is correct most of the time, say around 95 percent of the time, can create a false sense of security. Users may begin to rely on it without sufficient scrutiny, increasing the impact of the remaining errors.
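A quick calculation shows why 95 percent can be misleading for someone who uses a system repeatedly. The sketch below assumes that errors are independent across interactions, which is a simplification that real systems may not satisfy; the function name and the interaction counts are illustrative, and only the 95 percent figure comes from the discussion.

```python
def prob_at_least_one_error(accuracy: float, interactions: int) -> float:
    """Chance of hitting at least one wrong answer across several uses.

    Assumes each interaction is independently correct with probability
    `accuracy`.
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    if interactions < 0:
        raise ValueError("interactions must be non-negative")
    return 1.0 - accuracy ** interactions


if __name__ == "__main__":
    for n in (1, 5, 20, 50):
        p = prob_at_least_one_error(0.95, n)
        print(f"{n:>2} interactions: {p:.1%} chance of at least one error")
```

Under that independence assumption, a user who relies on a 95 percent accurate system 20 times has about a 64 percent chance of meeting at least one error, so unscrutinized reliance carries more risk than the headline accuracy suggests.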

In contrast, systems that keep users in the loop can lead to more resilient outcomes. Human involvement exists on a spectrum, and the level of oversight can shift depending on context.

Another important observation is that many failures begin with inputs that should not have been handled by the system in the first place. Identifying those cases early and routing them to humans can reduce cost, latency, and downstream risk.
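The idea of screening inputs before they reach the model can be sketched as a small triage step. Everything here is hypothetical and only illustrates the pattern: the `Request` shape, the `SUPPORTED_TOPICS` allow-list, and the length limit are assumptions, and a real system would likely derive the topic from a classifier rather than receive it as a field.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    MODEL = "model"
    HUMAN = "human"


@dataclass
class Request:
    text: str
    topic: str  # assumed to be assigned upstream, e.g. by a classifier


# Hypothetical allow-list of topics the system was designed and evaluated for.
SUPPORTED_TOPICS = {"billing", "shipping"}
MAX_CHARS = 2_000  # illustrative limit, not a recommended value


def triage(request: Request) -> tuple[Route, str]:
    """Decide, before calling the model, whether a request belongs to it."""
    if not request.text.strip():
        return Route.HUMAN, "empty input"
    if len(request.text) > MAX_CHARS:
        return Route.HUMAN, "input too long"
    if request.topic not in SUPPORTED_TOPICS:
        return Route.HUMAN, f"unsupported topic: {request.topic}"
    return Route.MODEL, "in scope"


if __name__ == "__main__":
    for req in (
        Request("Where is my order?", "shipping"),
        Request("Can I sue my landlord?", "legal"),
    ):
        route, reason = triage(req)
        print(f"{req.topic:<8} -> {route.value} ({reason})")
```

Because the checks run before any model call, out-of-scope requests incur no inference cost or latency, and the returned reason gives the human reviewer context for why the case was handed over.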

Closing Reflections

Across the conversation, several themes became clear.

The center of gravity in AI is moving from models to systems.

Trust shapes whether systems are used in practice.

Data influences what systems can achieve.

The most meaningful gains come from rethinking how work is done.

As models continue to improve, the way systems are designed around them will play an increasingly important role.
